Will AI make bad doctors better and good doctors worse?

And what we can learn from how doctors use non-traditional approaches

Last week we talked about the jagged edges of healthcare technology and how AI might help smooth some of those out. The same paper that discussed the concept of the jagged edges of AI also looked at human performance when using AI:

“Initial studies show AI improves the performance of low performers. AI is great at some things and terrible at others. How humans decide which tasks to allocate to AI is important. Experience helps.” “Within this growing frontier, AI can complement or even displace human work; outside of the frontier, AI output is inaccurate, less useful, and degrades human performance.”

Their paper suggests that people who “discern which tasks are best suited for human intervention and which can be efficiently managed by AI,” i.e., who figure out where the ‘invisible wall’ of AI capabilities lies, have the best overall performance.

The study also pointed out that people who used AI did better at most tasks. The authors looked at an elite consulting firm, BCG, and scored performance on 18 common tasks done by junior-level consultants. I was a consultant at Bain before medical school, and I am sure these were all very smart people who can generally figure out things like prompt engineering.

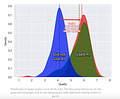

However, the better-performing group (the red group on the graph) received training on how to optimally use GPT-4, which is likely an important component of many of these “human uplift” studies. This finding implies that physicians who are trained to use AI tools will (surprise!) use them more effectively. I’m not particularly hopeful about hospitals paying to train clinicians on AI tools, but it seems clearly beneficial.

Can AI make every doctor above average?

But to me, the most important figure in the study is the one showing that inside the “invisible wall”, i.e., when performing tasks that AI is good at, “consultants who scored the worst when we assessed them at the start of the experiment had the biggest jump in their performance, 43%, when they got to use AI. The top consultants still got a boost, but less of one.”

This finding has incredible implications for medicine. Imagine if every physician were at least at the current average, rather than half being below average. A greater percentage of skillful physicians could markedly improve the health of the population and decrease morbidity and mortality. We might not see some of the ridiculous decisions from other physicians that every doctor can rattle off quickly. (For example, this week I got a note from a primary care provider about a patient with thrombocytopenia and no workup who was going for hip surgery; the note described the low platelets as “probably fine”.)

One representative study of physician practice variation examined guideline adherence in 6 common clinical scenarios and found marked differences between the top quartile (dark blue) and the bottom quartile (confusingly colored light blue), for example in C-sections for low-risk pregnancies and bronchodilator prescription for COPD. These percentages correspond to real patients who likely get unnecessary surgery or have more frequent COPD exacerbations. Imagine if all the patients got care comparable to that provided by the top half of these clinicians.

Additionally, AI might help even the best doctors avoid some very common cognitive biases, like anchoring bias. Avoiding these biases could improve decision making and outcomes as well.

What’s more, AI may allow us to delegate some more complex tasks to providers with less formal training, though the technology is definitely not there yet and it will require careful studies to demonstrate safety.

Will high-quality AI make good doctors worse?

Another paper makes a really thought-provoking observation about AI’s effect on human performance: more experienced workers performed better with lower-quality AI and worse with higher-quality AI. They tended to override the lower-quality AI when it gave them incomplete or incorrect information, and to “fall asleep at the wheel” with the higher-quality AI. This finding overlaps with concerns that high-quality AI will lead to cognitive shortcuts and deskilling for future physicians.

This paper comes with a lot of caveats about education related to AI use, human learning and feedback, and the complexity of human-AI interaction, all topics I expect many more studies to address in the coming years. Because many aspects of AI are a continuation of the progress of knowledge tools in medicine, I think we can take some comfort in evidence that physicians already discern when to use certain tools, such as traditional versus complementary medicine.

Lessons from how doctors use traditional vs complementary medicine at the jagged edge of healthcare

The best doctors I know discern which tasks are best suited for traditional approaches and which need alternative approaches, whether it’s “alternative medicine” or just borrowing a technique from a different field of medicine. Modern medicine is great at some things (appendicitis, genetic oncology profiling, constipation) and terrible at others (back pain, autoimmune diseases, equitable care). Conceptually, an incredibly common disorder like back pain shouldn’t be that much harder than a similarly common disorder like constipation. However, any primary care doctor will tell you that back pain is hard to treat effectively, while almost everyone can have their constipation resolved in a few days.

In the same way, discerning the tasks that AI is great at (summarizing long documents, finding specific pieces of information within documents, answering questions) and terrible at (specific citation references, decisions with little data, judgment) comes with training, with using the tools over time, and with developing an intuitive sense of when they are actually helpful.

As they move through medical school and residency, good doctors begin to intuit where the “invisible wall of healthcare” lies. They realize when interventions are almost always successful and when they aren’t. They often turn to non-traditional approaches when traditional medicine frequently fails their patients. When a patient has a condition that traditional medicine is bad at, like back pain, the best doctors I know suggest ‘complementary’ approaches much more frequently. For example, 50% of doctors in one study had recommended massage or chiropractic/osteopathic manipulation for back pain in the past year. Although the study didn’t ask, I’m guessing that the percentage that recommended alternative treatments for appendicitis, fractures, or a GI bleed would be close to zero.

According to one meta-analysis, other conditions for which doctors tend to recommend complementary approaches include disorders traditional medicine is often bad at:

Depression

Insomnia

Headache

Stomach or intestinal problem

In addition to those conditions, I’d add:

Immune conditions

Smoking cessation

Infertility

Weight loss

Although no one has ever explained the concept of a “jagged frontier of healthcare” to me or any other doctor, it’s encouraging that physicians naturally adapt to a tool once they get feedback about what it does well. They avoid approaches that don’t work, whether it’s asking AI to do math or prescribing high-dose opioids for back pain, both of which usually give terrible results. The way physicians and all clinicians navigate this jagged edge of healthcare daily gives me hope that, with experience, we will learn to use AI for the things it’s good at and avoid or ignore it otherwise. Hopefully we’ll all provide better care for our patients as we navigate this new frontier.