How to use AI for medical research and writing

It's the best and worst collaborator you'll ever have

When we think of great scientific research, we think of Newton and his apple, Curie coining the term radioactivity, and Einstein using elevators to determine relativity. We don’t think of all the tedium that is involved in modern-day research.

I’m going to assume you’ve never thought, “Oh great, now I get to format my references!” When I first started to write academic papers, the amount of tedium shocked me. First there’s the process of sifting through a bunch of papers that are only tangentially associated with what you’re actually interested in. Then there’s the formal literature review and synthesis. At the back end, there are even more painful activities. Collecting and formatting references is generally a terrible process despite how much better reference managers have gotten over the past 15 years. If you don’t have a reference manager, putting the references into a format that the journal will accept can be incredibly time-consuming. And revising - especially when you think you’re done with the writing process - can be the most aggravating of all.

A recent survey of 1,600 researchers shows considerable optimism about incorporating AI into research. Among early adopters (those who are currently using AI in research), more than 80% thought AI would be essential, very useful, or useful in research. Interestingly, among those who weren’t current users that number was less than 60%, suggesting that the more people used AI for their work, the more useful they thought it would be.

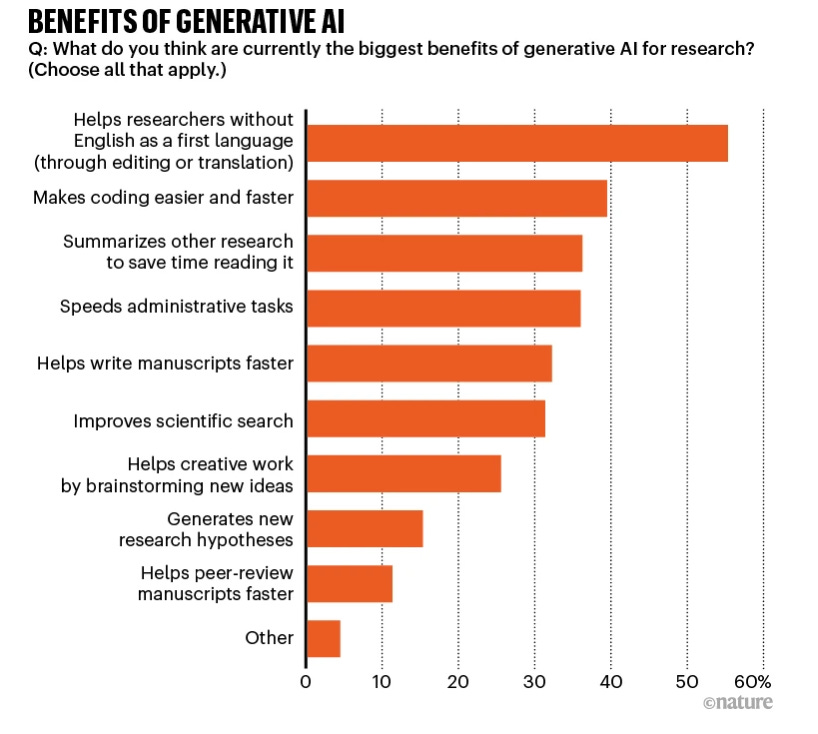

Additionally, researchers felt some of the biggest benefits of generative AI would be related to some of the most tedious tasks. Aside from translation and editing for researchers whose first language was not English, about 30% of respondents listed help with literature search, manuscript writing, and summarizing other research papers as activities with the most possible benefit. These tasks require relatively little cognitive power and are often farmed out to the most junior person on the team, so it makes sense that these would be prime candidates for AI use cases.

Let’s break down each of these research tasks and the tools that would be most helpful, from first steps through the finished product.

Brainstorm new ideas and generate new research hypotheses

One study pitted undergraduates against ChatGPT-4 in an annual startup innovation contest, and showed that the AI platform generated more and better ideas than the undergraduates. Moreover, the judges were more likely to invest in the ideas of the AI model, and only 4 of the top 40 business ideas came from the undergraduates. Does this mean that LLMs have better ideas than humans and we should just ask ChatGPT-4 to come up with our next research idea? Not at all. Another study found that the feasibility and impact of business ideas from GPT-4 were superior to those from a sample of humans, but the humans had more novel ideas. Similarly, a third study found that humans working with GPT-3.5 came up with more interesting writing topics than either humans or GPT alone. These results are more or less what you’d expect: the AI models use what they know to come up with ideas, while humans are often better at coming up with totally new ideas, and the combination of the two is better than either alone.

You can use this knowledge to your advantage when coming up with research proposals. Asking the AI tool for similar hypotheses, or questions that challenge your hypothesis, may provide a different perspective and force you to improve your question, method, or approach. One interesting aspect of similar studies is that the most creative people are often helped the least by AI tools, while the least creative get a noticeable bump. While no one likes to be thought of as uncreative with research ideas, we all have days when our creativity just doesn’t seem to be flowing as naturally. AI tools may be especially helpful on those days.

Improve scientific search

Current general purpose LLMs are frankly terrible at scientific literature searches. I have spent more hours than I care to admit looking up references to events the LLMs referred to that didn’t happen, full citations that were completely fabricated, and studies that were never done. If you want to do a literature search, do not use one of those tools. However, there is a new batch of fee-based AI platforms that specialize in searching, analyzing, and summarizing research. Elicit and Scite are commonly mentioned, and there will likely be more options in the near future. These tools can also search for common themes within the papers or topics you identify.

Summarizing other research

I like to think I’m a fast reader, but compared to an LLM I’m basically a toddler. Generative AI doesn’t have to read line by line, so you can give it a long scientific manuscript and it will do an amazingly good job of telling you the three most important points. You can use one of the research-specific tools above or use a general purpose LLM. If you want to upload manuscripts for an LLM to summarize, you’ll likely need to pay for access to ChatGPT, Claude, or a similar tool. There are even ways to integrate these summaries into a Notion database so you can keep track of all the studies you come across in one place.

Write manuscripts and grant applications faster

I enjoy writing but dread scientific writing. The tone and passive voice bore me, and trying to get all the information into a predefined format can feel monotonous. LLMs are great at taking bullet points and notes and turning them into full paragraphs in a specific format. You can jot down the important points you want to make and even upload a graph or image and ask the LLM to write the corresponding parts of your manuscript. It’s unlikely you’ll want to use the first draft you get back, but editing is almost always easier than staring at a blank page. Background and introduction sections are easy places to start with LLMs, but they can also format a collection of steps into a cohesive methods section with the right kinds of prompts.

Translation

Translating and refining text for non-native English speakers can be a huge benefit as well. LLMs got their start in the world of translation, and they are generally very good. One study points out that though only 7% of the world’s population are native English speakers, 75% of scientific publications are in English. Hopefully these LLM tools will allow more scientists from non-English speaking areas to contribute to the literature and increase its diversity of topics and perspectives.

Grant applications

Grant applications keep the same format across updates and cycles, so they are great candidates for generative AI: give the tool your information and ask it to format the relevant parts into specific sections. This can cut the tedium of reformatting and copying and pasting every time you have to update a funder.

Critique manuscripts

I use LLMs daily to critique what I write. I ask the AI tools for five positives and five areas for improvement for my text, and find it enormously helpful to have immediate feedback. If you have a particular concern, you can ask the LLM to comment specifically on narrative flow, tone, or readability. You can also ask the LLM to pretend it’s an editor of a medical journal and to give five reasons to accept or reject your manuscript, then adjust accordingly. For example, in a recent manuscript the AI tool advised changing the order of certain sections to improve flow and flagged redundant phrasing and some dense technical jargon to simplify for readability.
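The critique requests described above can be kept as a small reusable template instead of being retyped each time. This is only a minimal sketch: the function name `build_critique_prompt` and its parameters are hypothetical, the exact wording of the prompts is my own, and it only assembles text to paste into whichever chat tool you use. It does not call any AI service.

```python
def build_critique_prompt(text, focus=None, as_editor=False, n_points=5):
    """Assemble a critique request to paste into any chat-based LLM.

    Hypothetical helper for illustration; the wording is an assumption,
    not a recommended or tested prompt.
    """
    if as_editor:
        # Role-play variant: the LLM pretends to be a journal editor.
        role = "Act as an editor of a medical journal."
        task = f"Give {n_points} reasons to accept or reject this manuscript."
    else:
        # Default variant: balanced feedback on a draft.
        role = "You are reviewing a draft for its author."
        task = (f"List {n_points} positives and {n_points} areas "
                "for improvement for this text.")

    parts = [role, task]
    if focus:
        # Optional: narrow the feedback to particular concerns.
        parts.append("Comment specifically on " + ", ".join(focus) + ".")
    parts.append("Text:\n" + text)
    return "\n\n".join(parts)


if __name__ == "__main__":
    draft = "Paste your abstract or section here."
    print(build_critique_prompt(draft, focus=["narrative flow", "readability"]))
```

Keeping the role, the task, and the optional focus areas separate makes it easy to swap between the "five positives and five areas for improvement" request and the journal-editor request without rewriting the whole prompt.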

Citations

If you’re of a certain age, you remember the days before citation managers like Zotero, when you had to manually change the style of references every time you submitted a paper to a different journal. Although we’ve thankfully come a long way since then, integrating citations into the other tools and avoiding yet another platform is a huge benefit. Some of the tools mentioned above also screen the quality of your citations to ensure you’re using reputable sources.

Concerns about using generative AI for scientific research

When researchers were polled about their concerns, though, many of the hoped-for uses of AI turned out to be the same things that worried them. More than 60% of researchers were worried about bias in literature searches and plagiarism-related issues. These concerns likely represent the researchers’ assumption that most people will use AI for these less desirable tasks, as well as their concern about the technology itself having insufficient guardrails to prevent these kinds of issues.

When my co-author and I submitted our paper, we received a notice saying that two paragraphs were “lighting up” as AI-generated and would need to be rewritten, even though we had written them ourselves. We weren’t sure if we should be insulted that we wrote like a robot or pleased that our writing was so fluid. As other authors have pointed out, these plagiarism detection tools are far from perfect and have many false positives, but we rewrote the sections anyway.

As many new AI tools appear to aid in these tasks, I worry that these kinds of issues will actually add to the tedium for researchers: proving that a computer did not complete part of a task for you will be more of a burden than the original task itself. Fortunately, forward-thinking publishers realize that LLMs used for scientific writing are a tool like many others we use in medicine and research, and they support that use with appropriate acknowledgement.

Publishers must distinguish what the true contributions are in the manuscript-writing process. The thinking, synthesis, experimental design, and writing can be aided but not performed solely by an AI tool. No one seems to think that physicians not typing their own notes means that they are not thinking through a patient visit, for example. Perhaps by being clear that the contribution to science is about the idea and the research and not the related tedious tasks, AI could free up more physicians to pursue the activities they’re interested in: generating new ideas and solving problems.