What are healthcare AI startups doing now to evaluate for safety?

Watching President Reagan's prediction come true for healthcare AI

Last week we talked about how patient safety in AI is more than one-dimensional, and how a variety of approaches are likely needed in the ecosystem to figure out what kinds of tests make sense for specific tools, patients, and settings.

This got me thinking about what healthcare AI startups are doing now to evaluate their products for patient safety.

As a first-pass test, I searched for safety information from six of the top patient care-related healthcare AI startups that raised money last year, according to The Medical Futurist.

As an aside, it was hard for me to figure out what several of these companies actually do. Their websites left me very confident that they do something that involves a lot of business buzzwords strung together, like this description from Enlitic:

‘[Our software] “standardizes, protects, integrates, and analyzes data to create the foundation of a real-world evidence database that improves clinical workflows, increases efficiencies, and expands capacity” and gives healthcare professionals “unprecedented insight into the wealth of information residing within their archives, enabling more informed decision-making and improved patient outcomes.”’

Or this vision statement from Tempus:

“Empower every individual and every healthcare organization on the planet to unlock their healthcare data for improved clinical, operational and financial outcomes.”

It would be hard to convey less actual information than either of those statements does. The challenge of understanding these companies’ purpose underscored for me how much healthcare AI sales rely on actual salespeople to get in front of decision-makers and explain these products. The implication, then, is that these companies mostly interact directly with their customers, likely large hospital systems, and therefore have opportunities to ask those hospital systems what kind of evidence they want or need to see.

Demand- vs supply-side voluntary commitments

In a splashy press release in September, the government announced that the major US-based AI companies made voluntary commitments toward AI safety. These “supply side” commitments came directly from the companies creating the technology. In a less publicized move in December, the government announced voluntary commitments for healthcare companies that paralleled those from big foundation model companies. However, these were “demand side”, meaning that it was large hospital systems that committed to these principles, not the healthcare AI companies themselves.

The health systems committed to developing solutions that advance health equity, expand access to care, make care affordable, coordinate care to improve outcomes, reduce clinician burnout, and otherwise improve the experience of patients.

Speaking of famous supply-side enthusiasts, I thought this quote from President Reagan could apply to where we are in the healthcare AI lifecycle:

"Government's view of the economy could be summed up in a few short phrases: If it moves, tax it. If it keeps moving, regulate it. And if it stops moving, subsidize it."

Government regulation is being developed as healthcare AI moves forward, just as Reagan predicted.

Patient Safety Assessments by Company

For each of the following companies, I searched their websites for information related to patient safety, including studies they had performed and personnel specifically noted on the site to be safety-focused. This is by no means an exhaustive research effort, and some of these companies may be doing work that is not listed publicly, or have employees devoted to safety who are not listed on their websites.

Hippocratic AI

Hippocratic AI is a leader in testing their AI system in a systematic way, likely helped by their $120M in fundraising. Their motto is “The First Safety Focused LLM for Healthcare”. They even have a tab on their website specifically labeled “Safety”.

The company has what they call a constellation model (what I would call an agentic model) called Polaris, which can respond to patient queries about clinical and administrative issues.

To test their product, they followed a three-phase approach that mirrors clinical trial testing:

Phase 1 - fewer than 50 nurses/doctors tested the product

Phase 2 - 1000 tested the product

Phase 3 - health systems plus 500 doctors and 5,000 nurses test the product (in progress)

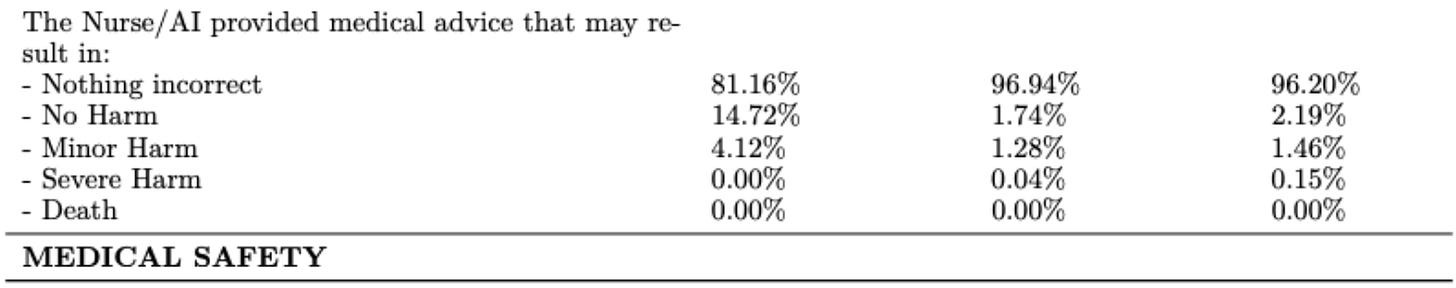

Hippocratic AI published their Phase 2 findings earlier this year, which showed their AI agent performed similarly to human nurses for triage in terms of safety.

Importantly, their study evaluated not just whether the advice could cause harm, but also aspects specific to their product like conversational quality. This multi-layered evaluation underscores that there is unlikely to be a one-size-fits-all evaluation for all healthcare AI products.

Corti AI

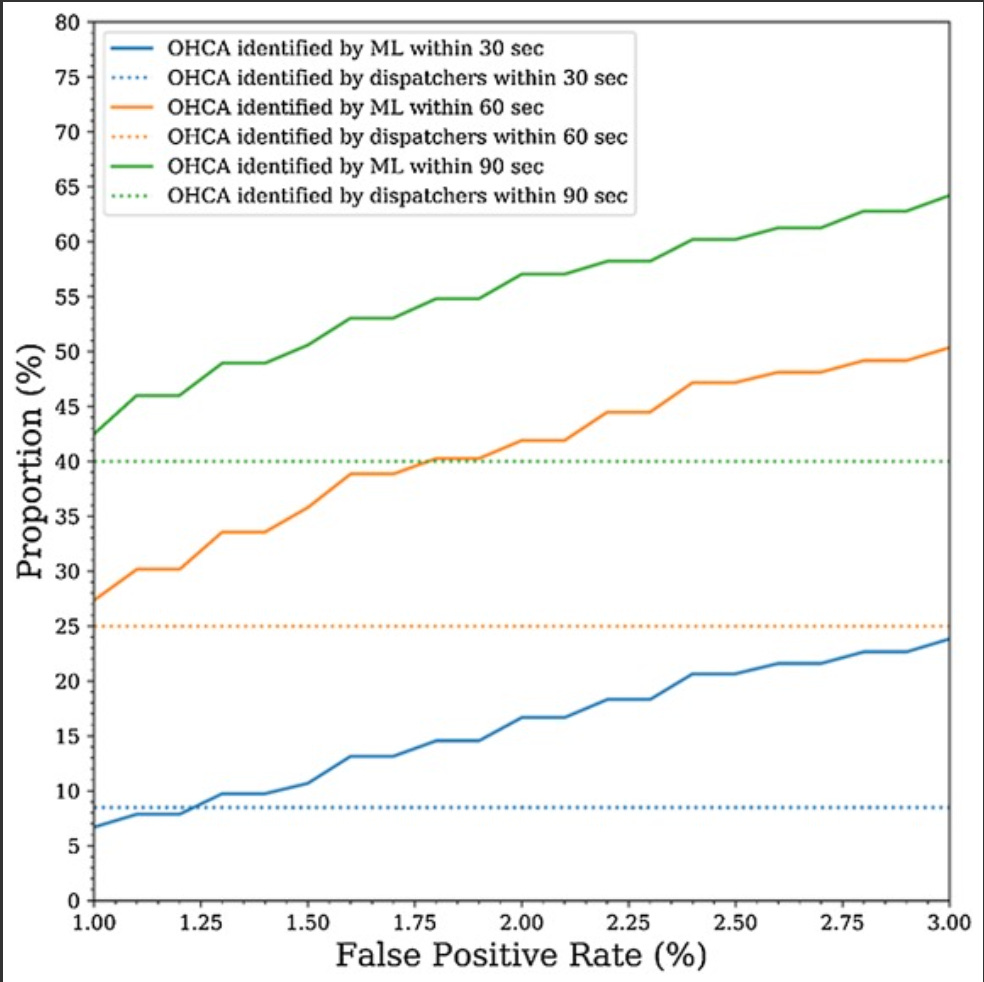

Corti is another company that has actual evidence to support safety. They trained a model on “thousands of hours of real patient calls and consultations”, and published a study in Resuscitation showing that the model identified out-of-hospital cardiac arrests more quickly than dispatchers.

Enlitic

Enlitic standardizes and de-identifies medical imaging data, and puts the right studies in radiologists’ baskets. I found no safety studies on their site. Two sample studies that would be helpful: the statistical likelihood of re-identification after their de-identification process, and the clinical safety of their data standardization.

Ada

Ada uses online symptom checkers to suggest diagnoses and triage patients. The company has several peer-reviewed studies that demonstrate efficacy, and it even has a quality officer and a physician who is the VP of Medical Safety. Some of their studies specifically use the word “safety”: “Real-world hospital ED study of 378 patients, comparing the safety of Ada’s urgency advice to that of the Manchester Triage System (MTS). Ada demonstrated a high rate of safety (94.7%) over all medical specialties of the Emergency Department.”
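A single percentage like 94.7% is easier to interpret alongside its statistical uncertainty. The sketch below is my own illustration, not part of Ada's study: it computes a Wilson score interval for a proportion, assuming that 94.7% of 378 patients corresponds to 358 safe recommendations (a count I inferred by rounding, not one reported in the quote). The function name `wilson_interval` and the constants are hypothetical.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p_hat = successes / n
    denom = 1 + z**2 / n
    # The interval is centered on a value pulled slightly toward 0.5.
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Assumption: the reported 94.7% of 378 patients rounds to 358 safe recommendations.
N_PATIENTS = 378
SAFE_COUNT = round(0.947 * N_PATIENTS)

low, high = wilson_interval(SAFE_COUNT, N_PATIENTS)
print(f"Observed: {SAFE_COUNT / N_PATIENTS:.1%}, 95% interval: {low:.1%} to {high:.1%}")
```

Under these assumptions the interval extends a couple of percentage points on either side of the reported rate, which is the kind of context that helps when comparing one safety figure against another.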

CloudMedX

CloudMedX does data integration and normalization among different siloed databases for health systems. For example, they do “rapid risk stratification to identify high-risk patients early and automated care coordination”. There’s no mention of safety on their site, though it follows naturally that any product offering risk stratification should be evaluated for bias.

Tempus

Tempus integrates “natural language understanding (NLU) and healthcare specific fine-tuned LLM models” focused on “building the first version of a platform capable of ingesting real time healthcare data in an effort to personalize diagnostics.” They have many, many publications that use their database, though the ones I saw don’t address issues like bias.

Why don’t more healthcare AI companies evaluate their products?

Expense. Clearly, mirroring the three phases of clinical trials when evaluating a healthcare AI model is extraordinarily expensive. But somehow startup drug and device manufacturers manage to provide this kind of data and testing even at relatively early stages.

Demand. The Biden administration published voluntary commitments related to healthcare AI that follow on the voluntary commitments by the main foundation model developers. However, instead of commitments by the healthcare AI developers, these are commitments by the demand side of the equation, the healthcare systems. If the demand side doesn’t really care about safety evaluations of healthcare AI products, these kinds of studies won’t ever get traction.

Requirements. There are no current requirements for healthcare AI companies to make their evaluations public (if they’re performing any privately).

It’s really complicated. Surprise! Evaluating AI systems is complicated, and evaluating anything in healthcare is complicated.

Summary

Right now we’re at the beginning of healthcare AI regulation for patient safety, which means requirements for demonstrating patient safety are minimal to non-existent unless a product qualifies as Software as a Medical Device. There are some evolving best practices for what this means, but as President Reagan predicted, there will be more regulation moving forward. As physicians, it’s crucial that we are active participants in creating meaningful regulations related to healthcare AI and patient safety.